Improving real-time emotion recognition system in assistive communication technologies for disabled persons using deep learning with equilibrium algorithm

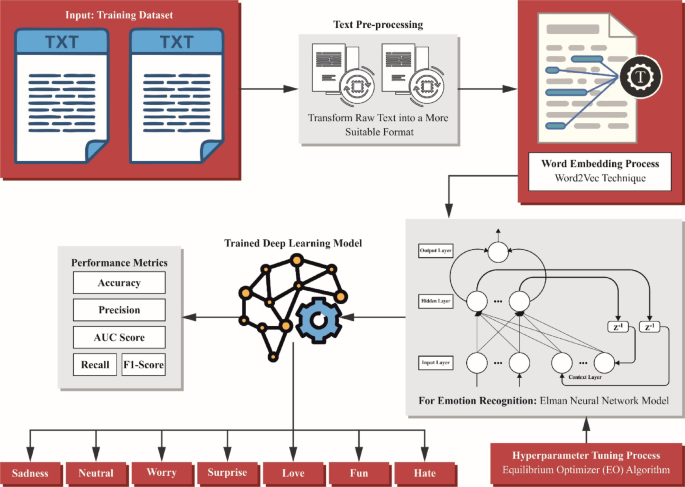

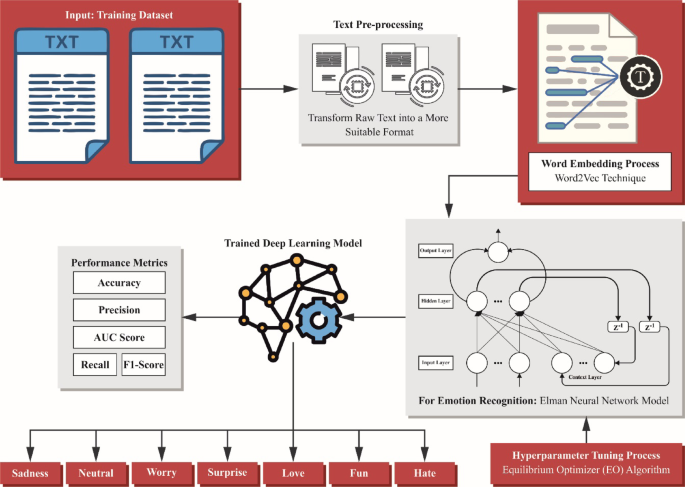

This paper introduces a novel SERDP-DLEOCE method for emotion recognition in text, designed to support more effective communication for people with disabilities. Figure 1 shows the workflow of the SERDP-DLEOCE technique.

Workflow of SERDP-DLEOCE method.

Text pre-processing

Text pre-processing is an essential stage in emotion recognition, as it prepares raw textual data for analysis by removing noise and normalizing the input18. Effective pre-processing ensures that the text is in a systematic format suitable for feature extraction and classification. It typically comprises several steps, each addressing a particular challenge in textual data:

1. Tokenization: The input text is split into smaller units, such as sentences or words, for analysis. This breaks complex sentences into manageable units for further processing.
2. Lowercasing: All text is converted to lowercase to remove case sensitivity. This ensures that words such as "Happy" and "happy" are treated as the same token, reducing redundancy in the data.
3. Removal of stop words: Frequently used words such as "is", "and", "the", and "of" are removed, as they contribute little to the emotional or sentiment content. This reduces noise and focuses the analysis on the words that carry meaningful context.
4. Removal of punctuation and special characters: Special characters and punctuation marks are removed to simplify the text and ensure uniformity. However, punctuation marks that convey emotion (for example, exclamation marks) may be retained.
5. Lemmatisation and stemming: Words are reduced to their root or base forms. For instance, "running" becomes "run", ensuring that related word forms are represented consistently.
6. Handling negations: Negations such as "not happy" are detected and treated as single units to preserve the intended sentiment.

By applying these pre-processing steps, emotion recognition systems can analyse textual data more reliably, improving the accuracy of downstream tasks such as word embedding, feature extraction, and classification.
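The six steps above can be sketched as a small pure-Python pipeline. This is an illustrative sketch, not the paper's implementation: the stop-word list, the negation words, the helper names `simple_stem` and `preprocess`, and the toy suffix-stripping stemmer (standing in for a real stemmer or lemmatiser) are all assumptions. Negations are merged before stop-word removal so that "not" is never separated from the word it modifies.

```python
import re

# Illustrative word lists; these are assumptions, not the paper's actual lists.
STOP_WORDS = {"is", "and", "the", "of", "a", "an", "to", "i", "am"}
NEGATIONS = {"not", "no", "never"}
KEEP_PUNCT = {"!"}  # punctuation kept because it can carry emotion


def simple_stem(word: str) -> str:
    """Toy suffix stripper standing in for a real stemmer or lemmatiser."""
    for suffix in ("ing", "ed"):
        stem = word[: -len(suffix)]
        if word.endswith(suffix) and len(stem) >= 3 and re.search(r"[aeiou]", stem):
            # Undo consonant doubling (running -> runn -> run), but keep ll/ss/zz.
            if stem[-1] == stem[-2] and stem[-1] not in "aeiouslz":
                stem = stem[:-1]
            return stem
    return word


def preprocess(text: str) -> list[str]:
    # Steps 1-2: lowercase, then split into word tokens and single punctuation marks.
    tokens = re.findall(r"[a-z0-9]+(?:'[a-z]+)?|[^\w\s]", text.lower())
    # Step 6: merge a negation with the following word, e.g. "not happy" -> "not_happy".
    merged, i = [], 0
    while i < len(tokens):
        if tokens[i] in NEGATIONS and i + 1 < len(tokens) and tokens[i + 1][0].isalnum():
            merged.append(tokens[i] + "_" + tokens[i + 1])
            i += 2
        else:
            merged.append(tokens[i])
            i += 1
    # Steps 3-4: drop stop words and punctuation, keeping emotive marks such as "!".
    kept = [t for t in merged
            if t not in STOP_WORDS and (t[0].isalnum() or t in KEEP_PUNCT)]
    # Step 5: reduce each remaining word to a base form.
    return [simple_stem(t) for t in kept]


print(preprocess("I am not happy, and running late!"))
# ['not_happy', 'run', 'late', '!']
```

In practice, a library stemmer or lemmatiser and a curated stop-word list would replace the toy components; the ordering of the steps is the main point of the sketch.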

Word embedding process

Next, Word2Vec is employed for word embedding, capturing semantic meaning by mapping words to dense vector representations19. It provides a continuous, low-dimensional space that preserves meaningful linguistic patterns, allowing the model to capture context and similarity in language. Its ability to encode both syntactic and semantic information is the main reason it is preferred here over other embedding models. Word2Vec is also computationally efficient and scales to large datasets, offering a good balance between performance and resource constraints, which justifies its use in the proposed framework.

Word2Vec is a word embedding approach commonly used in NLP for text classification. It represents each word as a dense, real-valued vector in a continuous vector space. Because it captures semantic relationships among words, Word2Vec is a valuable tool for text classification: the classifier gains a better understanding of the meanings of the words in the text and therefore performs better on classification tasks. Word2Vec has two main model architectures: Continuous Bag of Words (CBOW) and Skip-gram.

(i) CBOW: CBOW predicts a target word from the context words surrounding it. Training maximizes the probability of correctly predicting the target word \(w_t\) given its context words \(w_c\), where \(c\) ranges from \(-C\) to \(C\), as expressed in Eq. (1):

$$\text{Maximize}~\frac{1}{T}\sum_{t=1}^{T}\log P\left(w_t \mid w_c\right)$$

(1)

where \(T\) denotes the number of target words in the training data.

The probability of the target word given its context words is computed with the softmax function, as in Eq. (2):

$$P\left(w_t \mid w_c\right)=\frac{\exp\left(\theta_{w_t}\cdot\varphi_{w_c}\right)}{\sum_{w\,\in\,\text{vocabulary}}\exp\left(\theta_{w}\cdot\varphi_{w_c}\right)}$$

(2)

Here, \(\theta_{w_t}\) is the target-word embedding, i.e., the vector representation of the target word \(w_t\); \(\varphi_{w_c}\) is the context-word embedding, i.e., the vector representation of the context word \(w_c\); and \(\cdot\) denotes the dot product of two vectors.
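Eqs. (1) and (2) can be made concrete with a short NumPy sketch. It assumes two embedding matrices, `theta` (one target vector per vocabulary word) and `phi` (one context vector per word), and, as in the usual CBOW formulation, it averages the context vectors into a single \(\varphi_{w_c}\); the averaging, the function names, and the window handling are illustrative assumptions rather than details given in this section.

```python
import numpy as np


def cbow_probabilities(theta, phi, context):
    """Softmax of Eq. (2) over every vocabulary word, given context word indices."""
    phi_c = phi[context].mean(axis=0)     # assumption: context vectors are averaged
    scores = theta @ phi_c                # theta_w . phi_{w_c} for every word w
    scores = scores - scores.max()        # shift for numerical stability (softmax unchanged)
    exp_scores = np.exp(scores)
    return exp_scores / exp_scores.sum()


def cbow_objective(theta, phi, sentence, window=2):
    """Eq. (1): average log P(w_t | w_c) over the T target positions of a sentence."""
    total = 0.0
    for t, w_t in enumerate(sentence):
        context = [sentence[t + c] for c in range(-window, window + 1)
                   if c != 0 and 0 <= t + c < len(sentence)]
        if not context:                   # a one-word sentence has no context
            continue
        total += np.log(cbow_probabilities(theta, phi, context)[w_t])
    return total / len(sentence)


rng = np.random.default_rng(0)
theta, phi = rng.normal(size=(5, 3)), rng.normal(size=(5, 3))  # 5 words, 3 dims
print(cbow_probabilities(theta, phi, [1, 3]).sum())  # probabilities sum to 1
```

Training would adjust `theta` and `phi` to increase `cbow_objective`; the full softmax shown here is what Eq. (2) describes, although practical Word2Vec implementations usually approximate it with negative sampling or hierarchical softmax.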

(ii) Skip-gram: Skip-gram works in the opposite direction, predicting the context words from a given target word. Training maximizes the probability of correctly predicting each context word \(w_c\) given the target word \(w_t\), as expressed in Eq. (3):

$$\text{Maximize}~\frac{1}{T}\sum_{t=1}^{T}\log P\left(w_c \mid w_t\right)$$

(3)

The softmax function gives the probability of the context word given the target word, as in Eq. (4):

$$P\left(w_c \mid w_t\right)=\frac{\exp\left(\theta_{w_c}\cdot\varphi_{w_t}\right)}{\sum_{w\,\in\,\text{vocabulary}}\exp\left(\theta_{w}\cdot\varphi_{w_t}\right)}$$

(4)
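Eqs. (3) and (4) can likewise be sketched in NumPy. This assumes the same two hypothetical embedding matrices, `theta` and `phi`, indexed by word id, and follows the usual skip-gram form in which, for each of the \(T\) targets, the log-probabilities of all context words within a window of size `window` are summed; the log-softmax is computed with a log-sum-exp for numerical stability.

```python
import numpy as np


def skipgram_log_prob(theta, phi, w_c, w_t):
    """log P(w_c | w_t) from Eq. (4), using a numerically stable log-sum-exp."""
    scores = theta @ phi[w_t]             # theta_w . phi_{w_t} for every word w
    m = scores.max()
    log_z = m + np.log(np.exp(scores - m).sum())
    return scores[w_c] - log_z


def skipgram_objective(theta, phi, sentence, window=2):
    """Eq. (3): (1/T) * sum of log P(w_c | w_t) over each target's context window."""
    total = 0.0
    for t, w_t in enumerate(sentence):
        for c in range(-window, window + 1):
            if c != 0 and 0 <= t + c < len(sentence):
                total += skipgram_log_prob(theta, phi, sentence[t + c], w_t)
    return total / len(sentence)


rng = np.random.default_rng(1)
theta, phi = rng.normal(size=(5, 3)), rng.normal(size=(5, 3))
print(skipgram_objective(theta, phi, [0, 1, 2, 3, 4], window=2))
```

With all-zero embeddings every word is equally likely, so each log-probability equals \(-\log V\) for a vocabulary of size \(V\); this makes a convenient sanity check for the implementation.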

Emotion classification using ENN model

For emotion recognition in text, the Elman neural network (ENN) model is used20. It is chosen for its ability to capture contextual information and temporal dependencies in sequential data. Unlike conventional feedforward networks, it retains information about previous inputs, which helps it follow the flow and nuances of language. Compared with more complex recurrent models such as LSTM or GRU, it has a simpler architecture with fewer parameters, making it computationally efficient while still modelling short-term dependencies effectively. This balance between performance and efficiency makes the ENN suitable for emotion recognition, where capturing the order and context of words is crucial for accurate classification.

The ENN is a dynamic recurrent neural network (RNN) with local feedback connections. It typically comprises four layers: the input layer, the hidden layer (HL), the context layer, and the output layer. The context-layer neurons are connected one-to-one to the HL neurons. The context layer stores and delays the previous HL output and feeds it back to the HL, which gives the network short-term memory and allows it to exploit local temporal information. In implementation, the network is represented as a single neural-network object that encapsulates its weights, biases, training parameters, and structure, making the Elman model straightforward to compute and analyse.

The network is formulated mathematically as follows:

$$y\left( k \right)=g\left( {{w_3} \cdot x\left( k \right)} \right)$$

(5)

$$x\left(k\right)=f\left(w_1\cdot x_C\left(k\right)+w_2\cdot u\left(k-1\right)\right)$$

(6)

$$x_C\left(k\right)=\alpha\,x_C\left(k-1\right)+x\left(k-1\right)$$

(7)

Here, \(x\) is the \(n\)-dimensional vector of intermediate (hidden) layer node units; \(y\) is the \(m\)-dimensional output vector; \(x_C\) is the \(n\)-dimensional feedback (context) state vector; \(u\) is the \(r\)-dimensional input vector; \(w_1\) denotes the connection weights from the context layer to the intermediate layer; \(w_2\) denotes the connection weights from the input layer to the intermediate layer; \(w_3\) denotes the connection weights from the intermediate layer to the output layer; \(0\le\alpha<1\) is the self-feedback gain; \(f(\cdot)\) is the transfer function of the intermediate-layer neurons, typically a sigmoid; and \(g(\cdot)\) is the transfer function of the output neurons, which forms a linear combination of the intermediate-layer outputs.
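Eqs. (5) to (7) can be traced with a minimal NumPy forward pass. This is a sketch under stated assumptions: zero initial hidden and context states, a sigmoid \(f\), an identity (linear) \(g\), no bias terms, and weights supplied by the caller rather than trained; `elman_forward` is a hypothetical helper. In the pipeline described here, each row of `u_seq` would be the Word2Vec vector of one token.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def elman_forward(u_seq, w1, w2, w3, alpha=0.5):
    """Run Eqs. (5)-(7) over inputs u(0), ..., u(K-1); returns y(1), ..., y(K).

    u_seq: (K, r) inputs; w1: (n, n) context -> hidden; w2: (n, r) input -> hidden;
    w3: (m, n) hidden -> output. Output has shape (K, m).
    """
    if not 0 <= alpha < 1:
        raise ValueError("alpha must satisfy 0 <= alpha < 1")
    n = w1.shape[0]
    x_prev = np.zeros(n)    # x(k-1); assumption: x(0) = 0
    xc_prev = np.zeros(n)   # x_C(k-1); assumption: x_C(0) = 0
    outputs = []
    for u_prev in u_seq:                    # u_prev plays the role of u(k-1)
        xc = alpha * xc_prev + x_prev       # Eq. (7): context state with self-feedback
        x = sigmoid(w1 @ xc + w2 @ u_prev)  # Eq. (6): hidden layer, sigmoid f
        y = w3 @ x                          # Eq. (5): output layer, linear g
        outputs.append(y)
        x_prev, xc_prev = x, xc
    return np.array(outputs)


rng = np.random.default_rng(0)
u = rng.normal(size=(5, 4))                 # 5 tokens, 4-dimensional embeddings
y = elman_forward(u, rng.normal(size=(3, 3)), rng.normal(size=(3, 4)),
                  rng.normal(size=(2, 3)))
print(y.shape)  # (5, 2)
```

For classification, the output at the final step could, for example, be mapped to an emotion label with an argmax; that read-out is an assumption, since the section does not specify it.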

Parameter optimization using EO

Finally, the equilibrium optimizer (EO) tunes the hyperparameter values of the ENN to improve classification performance21. EO is chosen for its ability to explore and exploit the search space effectively when searching for good parameter settings. Compared with conventional techniques such as grid or random search, it tends to converge more efficiently and is less prone to becoming trapped in local optima, which improves the overall performance of the model. Its physics-inspired mechanism balances exploration and exploitation dynamically, enabling effective tuning. As a result, it improves accuracy while limiting computational overhead, making EO well suited to optimizing the ENN model.

The EO is a physics-inspired metaheuristic optimization algorithm. It is based on a control-volume mass balance: the rate of change of mass within the volume equals the rate of mass inflow minus the rate of outflow (plus any mass generated inside it), and the system evolves toward an equilibrium state.
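For reference, this mass balance is usually written as the first-order equation below. The symbols follow the common EO notation and are not defined elsewhere in this section: \(V\) is the control volume, \(C\) the concentration inside it, \(Q\) the volumetric flow rate, \(C_{eq}\) the equilibrium concentration, and \(G\) the mass generation rate.

$$V\frac{dC}{dt}=QC_{eq}-QC+G$$

The left-hand side is the rate of change of mass, while \(QC_{eq}\) and \(QC\) are the inflow and outflow rates. Solving this equation with the turnover rate \(\lambda=Q/V\) produces an exponential term of the form \(e^{-\lambda t}\), which is the origin of the exponential factor in Eq. (9).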

In EO, particles represent candidate solutions, and their concentrations correspond to the search parameters, i.e., the concentration of a particle determines its position in the search space. Each particle moves toward an equilibrium candidate chosen at random from a pool of the best solutions found so far, so the algorithm steers particles toward the equilibrium state in search of optimal solutions. The equilibrium candidates are collected into a vector, the equilibrium pool, defined as follows:

$$\left\{\begin{array}{l} X_{eq}\left(Iter\right)\in\left\{X_{eq1}\left(Iter\right),\ldots,X_{eq4}\left(Iter\right),X_{eq,ave}\left(Iter\right)\right\}\\ D\left(Iter\right)=X\left(Iter\right)-X_{eq}\left(Iter\right)\\ X\left(Iter+1\right)=X_{eq}\left(Iter\right)-D\left(Iter\right)\cdot F+\dfrac{G}{\lambda}\cdot\left(1-F\right)\end{array}\right.$$

(8)

Here, \(X_{eq1}(Iter),\dots,X_{eq4}(Iter)\) are the four best-fitted individuals in iteration \(Iter\), and \(X_{eq,ave}(Iter)\) is their average. \(X_{eq}(Iter)\) is selected at random from these five candidates: in every iteration, each particle updates its position using one of them, chosen with equal probability. For instance, in the first iteration the first particle may update its position according to \(X_{eq1}(Iter)\), while in the next iteration the average \(X_{eq,ave}(Iter)\) may be used. By the end of the optimization, each particle has therefore been updated with respect to each candidate solution roughly the same number of times. \(D(Iter)\) is the difference between a particle's position and the selected equilibrium candidate, \(\lambda\) is a random vector drawn uniformly from \([0,1]\), \(F\) is a random vector defined in Eq. (9), and \(G\) is another random vector given in Eq. (10).

$$F=2\,\mathrm{sign}\left(r-0.5\right)\cdot\left(e^{-\lambda l}-1\right)$$

(9)

$$\left\{\begin{array}{l} G=G_0\cdot F\\ G_0=GCP\cdot\left[X_{eq}\left(Iter\right)-\lambda\cdot X\left(Iter\right)\right]\\ GCP=\begin{cases}0.5\,r_1, & r_2\ge GP\\ 0, & r_2<GP\end{cases}\end{array}\right.$$

(10)

Here, \(r\) is a random vector whose components are drawn uniformly from \([0,1]\); \(r_1\) and \(r_2\) are random numbers drawn uniformly from \([0,1]\); \(GP\) is the generation probability, which determines how often the generation term contributes to the update; and \(l\) is the time parameter defined in Eq. (11), where \(Iter\) and \(Max_{iter}\) are the current and maximum iteration numbers.

$$l=\left(1-\frac{Iter}{Max_{iter}}\right)^{\frac{Iter}{Max_{iter}}}$$

(11)
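The update rules in Eqs. (8) to (11) can be combined into a compact NumPy sketch of the optimizer. This is a simplified illustration, not the paper's implementation: `equilibrium_optimizer`, the box bounds, the population size, `gp=0.5`, clipping to the bounds, and the small floor on \(\lambda\) that avoids division by zero are all assumptions. In the proposed framework, `fitness` would train the ENN with the encoded hyperparameters and return its precision; here a toy function stands in for that.

```python
import numpy as np


def equilibrium_optimizer(fitness, lower, upper, n_particles=20, max_iter=100,
                          gp=0.5, seed=0):
    """Maximise `fitness` over the box [lower, upper] using the updates of Eqs. (8)-(11)."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    dim = lower.size
    X = rng.uniform(lower, upper, size=(n_particles, dim))   # particle concentrations
    pool_x = np.zeros((4, dim))                              # X_eq1 ... X_eq4
    pool_f = np.full(4, -np.inf)
    for it in range(max_iter):
        fits = np.array([fitness(x) for x in X])
        # Keep the four best solutions seen so far as equilibrium candidates.
        all_x = np.vstack([pool_x, X])
        all_f = np.concatenate([pool_f, fits])
        top = np.argsort(all_f)[::-1][:4]
        pool_x, pool_f = all_x[top], all_f[top]
        candidates = np.vstack([pool_x, pool_x.mean(axis=0)])   # adds X_eq,ave
        l = (1 - it / max_iter) ** (it / max_iter)               # Eq. (11)
        for i in range(n_particles):
            x_eq = candidates[rng.integers(len(candidates))]    # equal-probability pick
            lam = rng.uniform(1e-8, 1.0, size=dim)              # lambda; floor avoids /0
            r = rng.uniform(size=dim)
            F = 2 * np.sign(r - 0.5) * (np.exp(-lam * l) - 1)   # Eq. (9)
            r1, r2 = rng.uniform(), rng.uniform()
            gcp = 0.5 * r1 if r2 >= gp else 0.0
            G = gcp * (x_eq - lam * X[i]) * F                   # Eq. (10)
            D = X[i] - x_eq
            X[i] = np.clip(x_eq - D * F + G / lam * (1 - F), lower, upper)  # Eq. (8)
    return pool_x[0], pool_f[0]


# Toy usage: the maximum of -(x - 1)^2 summed over two dimensions lies at (1, 1).
best_x, best_f = equilibrium_optimizer(lambda x: -np.sum((x - 1.0) ** 2),
                                       lower=[-5, -5], upper=[5, 5])
print(best_x, best_f)
```

Because \(l\) shrinks toward zero as iterations progress, \(|F|\) also shrinks, so the particles move from broad exploration early on to small refinements around the equilibrium candidates late in the run.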

The choice of fitness function is a key factor in the performance of the EO model. In the hyperparameter selection process, each candidate solution encodes a set of hyperparameter values, and its effectiveness is evaluated with the following fitness function, which maximizes precision:

$$Fitness~={\text{~max~}}\left( P \right)$$

(12)

$$P=\frac{{TP}}{{TP+FP}}$$

(13)

Here, \(TP\) and \(FP\) denote the numbers of true positives and false positives, respectively.
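Eqs. (12) and (13) can be illustrated with a short Python sketch. The section defines precision only through \(TP\) and \(FP\). For multi-class emotion labels, the per-class one-vs-rest counting and the macro average below are assumptions, as is returning 0.0 for a class that is never predicted; `precision` and `macro_precision` are hypothetical helper names.

```python
def precision(y_true, y_pred, positive):
    """Eq. (13): P = TP / (TP + FP), treating `positive` as the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if p == positive and t == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if p == positive and t != positive)
    return tp / (tp + fp) if tp + fp > 0 else 0.0  # assumption: 0.0 if never predicted


def macro_precision(y_true, y_pred):
    """Average of the per-class precisions (an assumed multi-class extension)."""
    labels = sorted(set(y_true) | set(y_pred))
    return sum(precision(y_true, y_pred, c) for c in labels) / len(labels)


y_true = ["joy", "anger", "joy", "sad"]
y_pred = ["joy", "joy", "joy", "sad"]
print(precision(y_true, y_pred, "joy"))   # 2 TP, 1 FP: about 0.667
print(macro_precision(y_true, y_pred))    # (0 + 2/3 + 1) / 3: about 0.556
```

Under this reading, EO would score each candidate hyperparameter vector by training the ENN, computing `macro_precision` on held-out predictions, and keeping the candidate with the highest value, as Eq. (12) specifies.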
